March 21, 2020

Updating A*

Different Agent Types and Different Architectural Elements

Adding Architectural Elements of Influence to A* Pathfinding

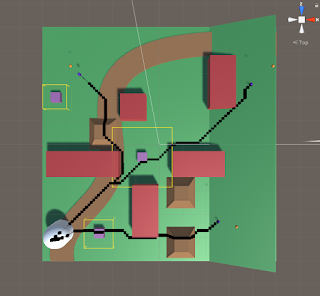

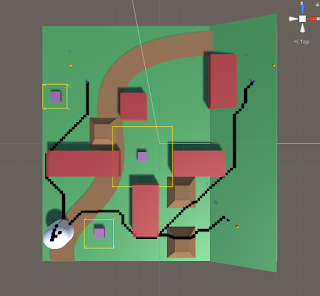

We want to be able to add various architectural elements to an environment and use them to influence the pathing of AI agents using A* pathfinding. Along with this, we want different agents to respond differently to the same architectural stimulus (e.g. some agents should prefer moving near windows, while others should prefer to stay away from them). This required adding some architectural data to both the agents and the nodes within the A* grid.

Implementing Architectural Elements

I have started to implement the approach outlined in my blog post from yesterday to create the foundation for this architectural pathing addition. To work out the feature, I started with just a single data point by adding a “window” value to both the agents and the nodes of the grid. The Agent window value measures the agent's affinity for window spaces (a higher value means they are more likely to move near or towards windows). The Node window value represents how much that space is influenced by windows: being closer to a window or near several windows raises this value, and a higher value means the node is more window influenced and more appealing to agents with a high window affinity.

I modified the existing Influence class into an abstract base class that I will use as the basis for these architectural influence objects. This allows me to pass on traits shared by all types of influence objects (such as dimensions for the area of influence) as well as methods they will all need (such as one which determines how they apply influence to the area around them). As a related benefit, I was able to move the influence method out of the AGrid class. That class is also responsible for preparing the entire grid, so this was a good way to trim it down.

Moving the influence spreading methods to the Influence objects themselves was also crucial because it allows different objects to apply influence in their own way. Some objects may apply influence to simple rectangular shapes, where others may project out in a cone, or even use ray casts to determine their area of influence. To help these Influence objects influence the proper area, I pass them a reference to the grid as well as their starting position (in terms of nodes on the grid). This process starts in the AGrid class, which currently holds a reference to all the Influence objects in the scene. It uses their transform information to determine which node each one is centered on, and passes that node on to the Influence object so it knows where it is located in the grid.
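To make that structure concrete, here is a minimal sketch of the idea in Python rather than the project's actual code. The `Grid` and `Node` classes are hypothetical stand-ins for AGrid and its nodes, and `RectangularWindowInfluence`, `strength`, `rx`, and `ry` are names I made up for illustration. The only parts taken from the description above are the abstract base class, the per-object `apply` method, and passing in the grid plus a center node.

```python
from __future__ import annotations

from abc import ABC, abstractmethod
from dataclasses import dataclass


@dataclass
class Node:
    """One grid cell; `window` is how strongly windows influence it."""
    window: float = 0.0


class Grid:
    """Hypothetical stand-in for AGrid: a 2D array of nodes."""

    def __init__(self, width: int, height: int) -> None:
        self.width = width
        self.height = height
        self.nodes = [[Node() for _ in range(height)] for _ in range(width)]

    def in_bounds(self, x: int, y: int) -> bool:
        return 0 <= x < self.width and 0 <= y < self.height


class Influence(ABC):
    """Base class: shared traits plus a method every subclass must define."""

    def __init__(self, strength: float) -> None:
        self.strength = strength

    @abstractmethod
    def apply(self, grid: Grid, center: tuple[int, int]) -> None:
        """Add this object's influence to the nodes it affects."""


class RectangularWindowInfluence(Influence):
    """Assumed shape: a flat rectangle with half-extents (rx, ry) around center."""

    def __init__(self, strength: float, rx: int, ry: int) -> None:
        super().__init__(strength)
        self.rx = rx
        self.ry = ry

    def apply(self, grid: Grid, center: tuple[int, int]) -> None:
        cx, cy = center
        for x in range(cx - self.rx, cx + self.rx + 1):
            for y in range(cy - self.ry, cy + self.ry + 1):
                if grid.in_bounds(x, y):
                    # Overlapping windows stack, so nodes near several
                    # windows end up with a higher window value.
                    grid.nodes[x][y].window += self.strength


# The grid owner works out each object's center node and hands it over.
grid = Grid(5, 5)
placed = [
    (RectangularWindowInfluence(1.0, 1, 1), (0, 0)),
    (RectangularWindowInfluence(1.0, 1, 1), (1, 1)),
]
for influence, center in placed:
    influence.apply(grid, center)
```

A cone or ray cast based object would just be another subclass with a different `apply`, which is the main reason for keeping that method off the grid class.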

Finally, I had to make sure a reference to the individual agent was passed through the entire pathfinding process so that its information could be used to produce a potentially different path for each type of agent. This just meant adding a UnitSimple reference as a parameter that is passed from UnitSimple itself, to the PathRequestManagerSimple, and eventually to the PathfindingHeapSimple, which finally uses that data to modify how it calculates cost when creating the paths.
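As a rough illustration of why the agent has to travel all the way down to the cost calculation, here is a small self-contained A* sketch in Python. It is not the project's PathfindingHeapSimple. The `Agent` class, the 0 to 1 affinity scale, and the `step_cost` rule are all placeholder assumptions, chosen only so that costs never go negative.

```python
import heapq
from dataclasses import dataclass


@dataclass
class Agent:
    # Assumed scale: 0.0 = avoids windows, 1.0 = seeks them out.
    window_affinity: float


def step_cost(agent, window_value):
    # Placeholder rule: base cost of 1 plus a penalty that shrinks
    # as the agent's affinity rises. Never drops below 1.
    return 1.0 + window_value * (1.0 - agent.window_affinity)


def find_path(window, start, goal, agent):
    """A* over a 4-connected grid; window[x][y] is the node's window value."""
    width, height = len(window), len(window[0])

    def heuristic(p):
        # Manhattan distance is admissible because every step costs >= 1.
        return abs(p[0] - goal[0]) + abs(p[1] - goal[1])

    open_heap = [(heuristic(start), 0.0, start)]
    best = {start: 0.0}
    came_from = {}
    while open_heap:
        _, g, current = heapq.heappop(open_heap)
        if current == goal:
            path = [current]
            while current in came_from:
                current = came_from[current]
                path.append(current)
            return path[::-1]
        if g > best[current]:
            continue  # stale heap entry
        x, y = current
        for nxt in ((x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)):
            nx, ny = nxt
            if not (0 <= nx < width and 0 <= ny < height):
                continue
            # The agent reference is what makes this cost agent-specific.
            new_g = g + step_cost(agent, window[nx][ny])
            if new_g < best.get(nxt, float("inf")):
                best[nxt] = new_g
                came_from[nxt] = current
                heapq.heappush(open_heap, (new_g + heuristic(nxt), new_g, nxt))
    return None


# 3x3 grid with one strongly window-influenced node at (1, 0).
window = [[0, 0, 0], [5, 0, 0], [0, 0, 0]]
lover_path = find_path(window, (0, 0), (2, 0), Agent(window_affinity=1.0))
avoider_path = find_path(window, (0, 0), (2, 0), Agent(window_affinity=0.0))
```

With these made-up numbers, the high affinity agent walks straight through the window node while the low affinity agent detours around it, even though both requested the same start and goal.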

Investigating How to Apply Architectural Influence as Cost

Figuring out how to mathematically combine the architectural value within the nodes and the architectural affinities of the agent in a sensible way has proven difficult, and I am still investigating a clean way to implement this. Because of how costs work in this pathfinding system, subtracting values from costs in an uncontrolled way is risky and prone to breaking, as negative costs can produce some very strange results.

To help determine a system to implement this, I broke it down as simply as I could. The goal is to use the two values, agent affinity and node architectural value, together to produce an architectural cost to add on top of the normal distance cost of a node. With that in mind, I created a set of constraints to guide the process, and eventually a basic system to follow for now that gives results at least in the proper realm of what we are looking for.

Architectural Cost Constraint Breakdown

Early on I thought it would be helpful to have a MAX affinity value and a MAX architectural value, since I thought I would either need to subtract from these values (so bigger numbers led to lower additional cost) or divide by them (to provide some proportionality within the cost calculation). These arbitrary values and the idea behind them were then used to come up with some initial constraints. These constraints were (the results are the architectural cost, which is added to the total node cost):

- MAX Affinity with MAX Architectural Value = 0

- MIN Affinity with MAX Architectural Value = MAX Architectural Cost

- Average Affinity with ANY Architectural Value = DEFAULT

- ANY Affinity with 0 Architectural Value = DEFAULT

The ideas behind these constraints were as follows. Having the highest affinity on a node with the most architectural value possible should produce the lowest architectural cost possible (0 in this case). The lowest affinity (or greatest aversion) with the highest architectural value on a node should produce the highest architectural cost possible. Having an average affinity means the agent is neutral towards that architectural element, so no architectural value has any major influence on the cost. Finally, if a node has 0 architectural value for a feature, the agent takes on no additional architectural cost beyond the default regardless of their affinity towards it, since the feature is not present in any way for that node.

Further Breakdown of Interaction Between Agent Affinity and Architectural Node Value

While this was a step in the right direction, I still was not sure exactly how I wanted to numerically determine the architectural cost. To further aid myself, I settled on a system that made a decent amount of sense with how I wanted these factors to interact. The tricky part is that it is the interaction between these two factors that determines the resulting cost on the node. It is not simply that more of one or more of the other reduces the cost on its own.

The concept I settled on was that the agent’s affinity value would be used to calculate a cost per architectural value factor (in unit terms, multiplying this factor by an architectural value results in a cost value). I decided that having a MAX affinity value made sense and could help with this approach. Affinities below the average of the MIN and MAX affinities (the value in the middle) would increase the cost of the node, where affinities above the average would reduce it. The architectural value would then just determine how much this rate influences the cost of the node. This correctly captures that agents with very low affinities should really want to avoid nodes with a lot of that architectural element, and those with very high affinities should really prefer nodes with that architectural element.
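One possible way to turn this into a formula is sketched below in Python. This is only a linear first guess, not a settled calculation. All of the constants are arbitrary, and setting the default cost to half of the maximum architectural cost is my own assumption, made so that the affinity rate can push the cost down to 0 or up to the maximum without ever going negative.

```python
# All constants here are arbitrary placeholders for illustration.
MIN_AFFINITY = 0.0
MAX_AFFINITY = 10.0
MAX_ARCH_VALUE = 10.0
MAX_ARCH_COST = 20.0
# Assumption: DEFAULT sits halfway between 0 and the max architectural cost.
DEFAULT_COST = MAX_ARCH_COST / 2


def architectural_cost(affinity: float, arch_value: float) -> float:
    """Cost added on top of a node's normal distance cost."""
    average = (MIN_AFFINITY + MAX_AFFINITY) / 2
    # Rate runs from +1 at MIN affinity, through 0 at the average,
    # down to -1 at MAX affinity.
    rate = (average - affinity) / (MAX_AFFINITY - average)
    # The node's architectural value scales how much the rate matters.
    weight = arch_value / MAX_ARCH_VALUE
    return DEFAULT_COST * (1.0 + rate * weight)


# The four constraints from the breakdown:
print(architectural_cost(MAX_AFFINITY, MAX_ARCH_VALUE))  # lowest cost
print(architectural_cost(MIN_AFFINITY, MAX_ARCH_VALUE))  # highest cost
print(architectural_cost(5.0, 7.0))                      # average affinity
print(architectural_cost(2.0, 0.0))                      # no windows nearby
```

Because the rate is only ever multiplied by a weight between 0 and 1, the result stays between 0 and the maximum architectural cost, which avoids the negative cost problem mentioned earlier.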

I feel like at this point I have most of the constraining factors and rules in place, and I just need to bring them all together in a relatively clean calculation. My first attempt does not need to be the perfect solution, since I can modify it later if certain mathematical approaches work better for this situation. I just need to make sure it encompasses the general ideas and goals we need from the system for now.

Next Steps

I mostly just need to bring everything together to get a calculation that satisfies our basic rules and goals for now. As stated, I can modify it if better mathematical approaches are found, but we just need something to test for now. I am investigating basic math formulas to see which curves look like they would fit the preferred results, so I will at least record those to test in the future (just simple squared, cubed, and root graphs). Finally, I need to fully implement the system so I can test the bare basics: that the different agents actually travel differently with the window objects in the world, and that the extra calculations do not slow the pathfinding to a crawl.
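For recording the candidate curves, something like the following Python sketch could be a starting point. It assumes the affinity has already been converted to a rate between -1 and 1 (negative for agents that like the element, positive for agents that avoid it), which is my own framing, and the `shape` helper and `CURVES` table are hypothetical names. Each curve is applied to the magnitude and the sign is restored afterwards, so the endpoints and the neutral midpoint stay fixed.

```python
import math

# Candidate curves, each mapping a magnitude in [0, 1] to [0, 1].
CURVES = {
    "linear": lambda m: m,
    "squared": lambda m: m * m,
    "cubed": lambda m: m ** 3,
    "root": math.sqrt,
}


def shape(rate: float, curve: str) -> float:
    """Apply a curve to a rate in [-1, 1] while preserving its sign."""
    shaped = CURVES[curve](abs(rate))
    return math.copysign(shaped, rate)


# Print a small table to compare how each curve treats mild vs strong rates.
for name in CURVES:
    row = [round(shape(r, name), 3) for r in (-1.0, -0.5, 0.0, 0.5, 1.0)]
    print(name, row)
```

Squared and cubed curves flatten the response for mildly opinionated agents and save the big cost changes for the extremes, while the root curve does the opposite, so comparing them side by side should help decide which feels right in testing.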