February 19, 2021

Pixel Art

Color Palettes with Aseprite

Title:

Pixel Art Class – Palettes & Colour

By:

AdamCYounis

YouTube – Tutorial

Description:

Learning about creating your own pixel art color palettes with the help of Aseprite.

Overview

This video covers the creation of color palettes for pixel art projects at an introductory level. The presenter is well versed in Aseprite, so they work in that software and show a few of its tricks that help when building a palette. They also go over some general concepts for choosing colors and their own process for creating palettes, which gives you a nice starting point.

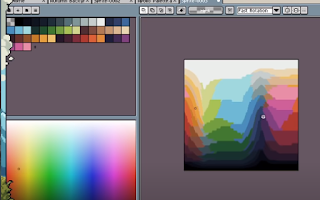

Reviewing a Palette Against the Spectrum

To check how a chosen palette matches up against a whole section of the color spectrum, they copy and paste a full slice of the spectrum into a new image in Aseprite. It has to be a slice because the color spectrum is 3D (i.e. R, G, and B, or H, S, and V), so you can only view one 2D slice at a time. They then select their color palette and switch the image to Indexed color mode. This replaces every part of the spectrum shown with whichever palette color most closely resembles it.

This is a very effective way to see how a given palette covers the spectrum: which kinds of colors it can represent in detail and which it can only represent in broad strokes. It can also guide your color selection while you build a palette, because it shows the areas where the palette is deficient and where you may want to add more options.

Found through:

Sprite -> Color Mode -> Indexed

Aseprite Indexed Color Spectrum Compared to Palette Example (from video tutorial)
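
As a rough illustration of what this check tells you, the sketch below samples a hue/value slice of the spectrum, maps each sample to the nearest entry of a palette, and counts how many samples each entry absorbs. It is not Aseprite's actual conversion: the squared-distance match in RGB, the function names nearest_palette_index and spectrum_coverage, and the sample palette are all assumptions made for this example.

```python
import colorsys

def nearest_palette_index(rgb, palette):
    """Index of the palette color closest to rgb (squared distance in RGB).
    Assumption: a simple RGB distance, not Aseprite's own matching."""
    return min(
        range(len(palette)),
        key=lambda i: sum((a - b) ** 2 for a, b in zip(rgb, palette[i])),
    )

def spectrum_coverage(palette, saturation=1.0, hue_steps=36, value_steps=10):
    """Count how many sampled cells of a hue/value slice map to each palette entry."""
    counts = [0] * len(palette)
    for h in range(hue_steps):
        for v in range(1, value_steps + 1):
            r, g, b = colorsys.hsv_to_rgb(h / hue_steps, saturation, v / value_steps)
            rgb = (round(r * 255), round(g * 255), round(b * 255))
            counts[nearest_palette_index(rgb, palette)] += 1
    return counts

if __name__ == "__main__":
    # Hypothetical three-color palette, only for demonstration.
    sample_palette = [(40, 60, 40), (90, 160, 80), (200, 220, 140)]
    print(spectrum_coverage(sample_palette, saturation=0.55))
```

An entry with a very large count is standing in for a wide area of the slice, which hints at where an extra palette color might be worth adding.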

Creating a Color Palette

They start with selecting an initial saturation level.

This helps set the tone for all your colors, and because it is generally uncommon to see images with stark contrasts in saturation, it makes a good starting point. They tend to choose a saturation of around 50 – 60%, since that sits in the middle of the range.

They then choose a starting color in the greens or blues and mostly just look for a color they think looks nice. Starting with green, they generally look for something that works well for grass since they are looking for practical applications as a game developer most of the time. Once they find a good starting color, they add that to the palette.

Creating Color Ramps

Next they look to build a color ramp with that color. They simply shift the color somewhat in hue and somewhat in lightness or value. For example with the green, they will move up towards a lighter green as well as left towards the yellow in a single shift. If they move down, they move towards the darker green as well as right towards the blue. They choose more colors than they think they will need, so they can see more color options during the building process and they can remove excess colors later.

As they note later, if you are already working on a yellow hue, moving towards yellow does not really work. They suggest letting your specific context and the feel you are going for determine how you shift colors. In their example, the yellow shifted towards brown as it got darker to help produce woody and leathery tones. They also had a red that shifted towards yellow as it got lighter (which would be to the ‘right’, as opposed to the ‘left’ shift the green uses).
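
The combined hue and value shift can be sketched in a few lines. This is only an illustration of the idea, not the presenter's exact numbers: the function name build_ramp and the step sizes (10 degrees of hue and 0.12 of value per step) are assumptions chosen for the example. Hue is given in degrees, where yellow is 60, green is 120, and blue is 240.

```python
import colorsys

def build_ramp(base_hue, saturation, base_value,
               steps_each_side=3, hue_shift=10.0, value_step=0.12):
    """Return RGB tuples ordered darkest to lightest.

    Each lighter step lowers the hue by hue_shift degrees and raises the value;
    each darker step does the opposite. For a green base this means lighter
    colors drift towards yellow and darker colors drift towards blue.
    Assumption: evenly sized steps, which the tutorial treats only as a start.
    """
    ramp = []
    for i in range(-steps_each_side, steps_each_side + 1):
        hue = (base_hue - i * hue_shift) % 360
        value = min(1.0, max(0.0, base_value + i * value_step))
        r, g, b = colorsys.hsv_to_rgb(hue / 360, saturation, value)
        ramp.append((round(r * 255), round(g * 255), round(b * 255)))
    return ramp

if __name__ == "__main__":
    # A grass-like green at 55% saturation, as a starting point to tweak by eye.
    for color in build_ramp(120, 0.55, 0.6):
        print(color)
```

Passing a negative hue_shift reverses the direction, which is what a red ramp that moves towards yellow as it lightens would need.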

You can see the color you select in the palette on your chosen color spectrum. This can be used to see how well your colors line up with your original designated color. If they do not line up well, you can use this to help you determine how to tweak individual colors in the ramp to work better for you.

After this, they start to throw the colors on the canvas to see how they look individually as well as with each other. A helpful shortcut for this is the square bracket keys ([ and ]), which move between color indices. They identify which colors they like, which ones work well with those, and which ones don’t. They then modify the colors they don’t like, or that don’t match well with their ideal colors, until they fall in line. Finally, they identify any colors in the ramp that they do not need or that seem extraneous and remove them to simplify the palette.

They do not try to make mathematically precise jumps from color to color or shade to shade. While even steps can help as a general starting point, they suggest going by what your eyes tell you “looks good”. In the example they show, larger jumps between the brighter colors and much smaller jumps between the darker colors looked pretty nice for their color ramp.

Another option to keep in mind is changing the values of all the colors in a ramp at once. You can select the entire ramp, or portions of it, and alter the hue, saturation, or value/lightness of the selection all together. Hue changes the color itself, so usually only slight variations will work there, but the other two can support significant differences to provide different feels or tones.
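
Outside of Aseprite, the same kind of whole-ramp adjustment can be sketched as below. The function name adjust_ramp and its parameters are hypothetical, and the sketch works on plain RGB tuples rather than on an Aseprite palette; it simply adds the same offset to the hue, saturation, or value of every color.

```python
import colorsys

def clamp(x, lo=0.0, hi=1.0):
    """Keep x inside the range [lo, hi]."""
    return max(lo, min(hi, x))

def adjust_ramp(ramp, hue_delta=0.0, sat_delta=0.0, val_delta=0.0):
    """Shift every color in a ramp by the same HSV offsets.

    hue_delta is in degrees; sat_delta and val_delta are fractions (0.1 = +10%).
    Saturation and value are clamped to the valid range, hue wraps around.
    """
    adjusted = []
    for r, g, b in ramp:
        h, s, v = colorsys.rgb_to_hsv(r / 255, g / 255, b / 255)
        h = (h + hue_delta / 360.0) % 1.0
        s = clamp(s + sat_delta)
        v = clamp(v + val_delta)
        nr, ng, nb = colorsys.hsv_to_rgb(h, s, v)
        adjusted.append((round(nr * 255), round(ng * 255), round(nb * 255)))
    return adjusted

if __name__ == "__main__":
    greens = [(27, 61, 44), (69, 153, 69), (171, 245, 110)]
    # A slightly more muted, slightly darker variant of the same ramp.
    print(adjust_ramp(greens, sat_delta=-0.15, val_delta=-0.05))
```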

Keyboard Shortcuts:

Move Between Color Indices = [ and ]

Bridging Ramps

They tend to think of their overall color palette as converging at the extremes of lightness and darkness. As the colors get closer to white they all become much more similar, and likewise as they get closer to black. In effect, the colors widen and spread over a larger range around the middling values between white and black.

This is not something that needs to be done every time by any means; it is just one approach they tried. Looking again at the color bridge examples, this approach effectively makes the top and bottom (the whites and blacks) a color bridge spanning all of your hues.

To help this process, once a ramp is in place, they increase the saturation of the colors in the upper part of the middle third of the ramp and desaturate all the other colors. This helps the lighter and darker colors “bleed into each other” better.
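
One way to picture this saturation adjustment is as a multiplier per ramp entry that peaks somewhere above the middle of the ramp and falls away towards both ends. The sketch below is an interpretation, not the presenter's procedure: the function names, the peak position of 0.6, and the scale factors are all assumptions, and the ramp is assumed to be a list of (h, s, v) tuples ordered darkest to lightest.

```python
def bridge_saturation_scales(count, peak_position=0.6, peak_scale=1.25, end_scale=0.4):
    """Return one saturation multiplier per ramp entry (darkest first).

    The multiplier equals peak_scale at peak_position (a fraction of the way
    from darkest to lightest, strictly between 0 and 1) and falls linearly
    to end_scale at both ends of the ramp.
    """
    scales = []
    for i in range(count):
        t = i / (count - 1) if count > 1 else peak_position
        if t < peak_position:
            distance = (peak_position - t) / peak_position
        else:
            distance = (t - peak_position) / (1 - peak_position)
        scales.append(peak_scale + (end_scale - peak_scale) * distance)
    return scales

def apply_bridge(ramp_hsv, **kwargs):
    """Scale the saturation of each (h, s, v) entry, capping saturation at 1.0."""
    scales = bridge_saturation_scales(len(ramp_hsv), **kwargs)
    return [(h, min(1.0, s * k), v) for (h, s, v), k in zip(ramp_hsv, scales)]

if __name__ == "__main__":
    # Hypothetical green ramp in HSV, darkest to lightest.
    ramp = [(0.42, 0.55, 0.2), (0.39, 0.55, 0.35), (0.36, 0.55, 0.5),
            (0.33, 0.55, 0.65), (0.30, 0.55, 0.8), (0.27, 0.55, 0.95)]
    for color in apply_bridge(ramp):
        print(color)
```

Because every ramp loses saturation near its lightest and darkest entries, those entries end up close to one another across hues, which is the converging effect described above.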

They then move to their next hue (blue in this case) and begin the process of building another color ramp. They can leave previous colors and ramps on the canvas to help provide context for the current color ramp they are structuring. As they place the new colors on the canvas to test them, they also use Aseprite’s shade tool to quickly check all the different colors through their ramp.

Summary

To recap their process shown in the tutorial:

They start by selecting a saturation level to use across the entire palette (usually in the 50 – 60% range). They start with a main focus color and just try to find a good looking color to represent it to serve as a starting point. They then create a color ramp for this color by moving up and towards yellow to provide warmth as they brighten their colors, and down and towards blue to provide coolness as they darken the colors. Some colors will require moving towards other colors, so choose what fits your overall feel and needs. Creating a linear color ramp (a straight line through the HSV spectrum image) can be a decent starting point, but they do not fear strongly breaking away from this to get the right tones. Also choose extra colors, as later when colors seem too close they are easily removed to simplify the palette.

They then throw the colors from that ramp all over the canvas to see how they look individually as well as with each other. Here they do further tweaking until it looks good. These processes are then repeated with each of the next colors, and they can continually be added to the canvas to see how they work in the context of your other selected colors as well.

To create one type of bridging, they look to bridge everything at the brightest and darkest levels. To achieve this, they increase the saturation in the upper part of the middle third of all their color ramps and desaturate everything else. This widens the color range of those middling colors while bringing the more extreme colors closer together so they can blend easily.

Finally, the entire color palette can be checked against the HSV spectrum to see how well your colors cover it. This is done in Aseprite by taking a quick snapshot of the HSV spectrum, opening it as an image, and switching to the “Indexed” color mode with the palette selected. From here, further tweaks can be made if you feel a color is under- or over-represented in the amount of detail and differentiation it provides.

via Blogger http://stevelilleyschool.blogspot.com/2021/02/designing-pixel-art-color-palettes-in.html